Cheap Intelligence Makes Accountability Expensive

The strange thing about better AI is that it may not reduce the need for human judgment. It may multiply it.

Once agents are useful, companies will not use them sparingly. They will use them everywhere: to draft customer emails, update tickets, flag churn risk, summarize sales calls, recommend code changes, escalate support issues, and prepare decisions. Each action will be cheaper, but each consequential action will still need someone to stand behind it.

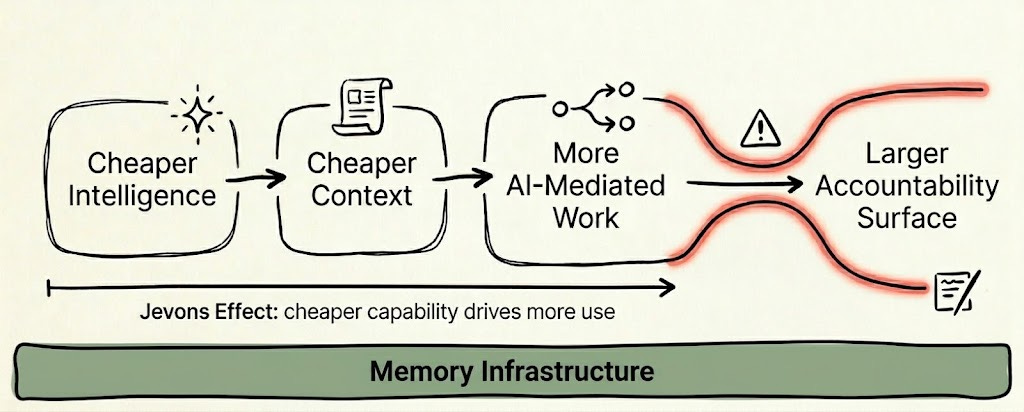

That is where the bottleneck moves: intelligence gets cheaper, context gets cheaper, but accountability does not. This is the Jevons effect hiding inside enterprise AI.

You can see the problem before invoking any theory. Imagine an AI system performs 1,000 consequential actions with a 5 percent error rate. That produces 50 errors. Now imagine the model improves and the error rate falls to 1 percent, but the company runs 100,000 AI-mediated actions because the system is finally useful. The error rate improved fivefold, yet the organization is now dealing with 1,000 errors, twenty times as many as before.
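The arithmetic is small enough to check directly. The sketch below uses only the numbers from the example above; the function names are illustrative, and the break-even volume is derived from those numbers rather than taken from any real deployment.

```python
def expected_errors(actions: int, error_rate_pct: float) -> float:
    """Expected number of wrong actions at a given volume and error rate (in percent)."""
    return actions * error_rate_pct / 100


def break_even_volume(old_actions: int, old_rate_pct: float, new_rate_pct: float) -> float:
    """Volume at which the improved model produces as many errors as the old one did."""
    return old_actions * old_rate_pct / new_rate_pct


before = expected_errors(1_000, 5)            # old model, low volume
after = expected_errors(100_000, 1)           # better model, high volume
break_even = break_even_volume(1_000, 5, 1)   # where the accuracy gain is used up

print(f"errors before: {before:.0f}")
print(f"errors after: {after:.0f}")
print(f"break-even volume: {break_even:.0f} actions")
```

Under these numbers, the fivefold accuracy gain is cancelled once volume grows past 5,000 actions. The example assumes a hundredfold increase in volume, which is why the absolute error count rises even though the rate falls.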

The model got better while the liability surface got larger. This is why “how accurate is the model?” is not enough as an enterprise question. The harder question is who becomes responsible when the model is wrong, and what context that person had when they approved, ignored, or delegated the action.

Most of the AI conversation assumes intelligence is the scarce resource. That will be directionally right for a while. Better models will make more work possible, and every jump in capability expands what people are willing to delegate. Inside a company, though, intelligence by itself is not enough. A very smart system with the wrong context will still produce the wrong answer, and a very smart system whose provider does not own the outcome cannot act on anything consequential without a human or organization accepting responsibility.

This applies whether the intelligence comes from OpenAI, Anthropic, another model provider, or an internal agent team. The model can draft the email, update the ticket, recommend the code change, flag the churn risk, or write the customer follow-up. But once the action matters, someone inside the company has to own the decision. Someone has to say: “I understand the basis for this action, and I am prepared to stand behind it.” That person may be a manager, lawyer, operator, engineer, account owner, or executive. It will not be whoever supplied the intelligence unless that provider is willing to absorb the consequences of being wrong.

Jevons Paradox gives us the shape of the problem. William Stanley Jevons observed that making steam engines more efficient did not reduce coal consumption; it made coal-powered work cheaper, which expanded coal use across the economy (NPR). The simple version is familiar now: when a resource becomes cheaper to use, total usage can rise instead of fall. For AI, the question is not whether usage rises. The question is what becomes the new bottleneck.

The spreadsheet is a better analogy than coal. Spreadsheets did not eliminate financial judgment. They made modeling cheap enough that companies demanded more modeling, more scenarios, and more justification before decisions. Before VisiCalc, changing assumptions in a financial model meant manually recalculating the model and waiting for the next version. Afterward, managers could ask “what if” questions, test assumptions, and quickly analyze effects on expenses, sales, profits, investment, inventory, and budgets before acting. Fast recalculation encouraged more experimentation and more fine-tuning of business plans (EBSCO).

The important part is what happened next. When the cost of a model collapsed, companies asked for more scenarios, forecasts, variance explanations, sensitivity tables, board decks, and justification before decisions. The spreadsheet removed some arithmetic work, but it created more demand for people who could interpret the model, defend the assumptions, and decide what to do.

Computers did something similar to intellectual labor more broadly. A Bureau of Labor Statistics report says computers reduced demand for routine work while increasing demand or compensation for non-routine analytical and interpersonal work (BLS). Making the mechanical part easier often increases demand for the judgment layer above it.

Company context will follow the same pattern. Today, companies ration context because reconstructing it is expensive. A customer success leader will not investigate every weak churn signal because stitching together calls, tickets, Slack threads, and CRM notes takes time. A product leader will not trace every roadmap disagreement back through customer conversations and old decisions. A CEO cannot inspect every early sign of execution drift without turning the company into a reporting machine.

The raw material exists, but it is scattered across artifacts that were never designed to explain themselves together: a customer promise in a call, a workaround buried in Slack, an official status in Salesforce, and a decision from a meeting. The follow-up may have become a ticket, or it may have disappeared. Organizations quietly decide that many questions are too expensive to ask.

A company brain changes those economics. If the system can assemble account history, customer promises, unresolved blockers, ownership changes, prior decisions, source evidence, and current state in minutes, the number of worthwhile investigations goes up. The same people who previously could not justify the time will suddenly want the context, because the cost has fallen below the threshold where asking becomes rational.

Demand shows up in concrete places. Sales will pull context before smaller renewals, not just the giant enterprise deals. Customer success will look for risk traces across the whole book. Product will want live customer evidence during roadmap debates rather than a quarterly synthesis that has already flattened the nuance. Engineering will want decision and failure context before ordinary risky changes. Leadership will want weak signals before the metric moves, because after the metric moves the company is already late.

Agents make the rebound stronger. Once they can draft, route, investigate, escalate, and recommend with company context, AI-mediated work stops being a small set of demos and becomes part of the operating surface of the company. Support escalations can arrive with account history; sales follow-ups can carry prior promises; product briefs can include customer evidence; ticket updates can be checked against commitments. Each action may be cheaper, but the total number of actions rises.

The Jevons effect for organizational intelligence is simple: when context gets cheaper, companies will use more context. They will spend the savings by asking more questions, checking more decisions, and expecting more evidence around work that used to proceed on partial information.

This turns company brains into accountability infrastructure, not just productivity infrastructure. A surfaced customer promise forces a human choice: honor it, reject it, or renegotiate it. A contradiction between roadmap plans and sales commitments forces a decision about which truth wins. A drafted customer email still needs someone to stand behind the factual claims, the commitments, and the tone.

The AI can prepare the field, but it cannot absorb organizational responsibility unless the intelligence provider is willing to take the liability. I do not see that happening soon. Until it does, the responsibility flows back into the company, usually to a manager, owner, reviewer, operator, lawyer, account lead, engineer, or executive who has to decide what the company is prepared to stand behind.

This is where company memory changes what the organization can reasonably claim it did not know. When context was expensive, ignorance was often operationally acceptable: missed commitments hid in meeting transcripts, bad assumptions lived in old planning docs, and customer risks sat across three systems without anyone seeing the whole pattern. When context becomes cheap, the standard changes. If the system could have shown the promise, the risk, the contradiction, or the stale decision, the natural question becomes: who saw it, who ignored it, and who owned the next action?

E-discovery is the closest enterprise analogy because it shows what happens when finding evidence becomes easier. Automation reduced some of the burden of finding, filtering, and organizing large volumes of documents, while also raising expectations around defensibility. JD Supra notes that automated e-discovery systems can maintain chain-of-custody records, track decision rationales, and demonstrate process integrity under judicial scrutiny (JD Supra).

The lesson is uncomfortable: once evidence becomes easier to find, people expect you to find it. Once process can be tracked, people expect you to defend it. Once context becomes cheap, “we did not know” becomes a weaker excuse.

None of this means the system should surface everything to everyone. A company brain that broadcasts every weak signal across the organization will drown it in noise and compliance nightmares. The structural fix is more than intelligent synthesis; it requires strict provenance and an access-controlled organizational memory.

A useful system must show what changed, the exact source evidence, and who is authorized to see and resolve it. Some signals become tasks, others remain watch items. The hard part is ensuring the right context flows only to the specific human whose judgment the company is prepared to stand behind.
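One way to picture those requirements is as a record type. The following is a minimal sketch under stated assumptions, not a description of any real product: every name in it (`Signal`, `Evidence`, `Disposition`, `visible_to`) is hypothetical, the sample data is made up, and the access rule is a deliberately simple allow-list.

```python
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    TASK = "task"    # someone has to act on it
    WATCH = "watch"  # keep tracking, no action yet


@dataclass(frozen=True)
class Evidence:
    source: str   # where it came from, e.g. "sales call" (illustrative label)
    excerpt: str  # the exact passage the signal relies on


@dataclass(frozen=True)
class Signal:
    what_changed: str
    evidence: tuple[Evidence, ...]
    authorized: frozenset[str]   # who may see and resolve it
    owner: str                   # whose judgment the company stands behind
    disposition: Disposition = Disposition.WATCH

    def __post_init__(self) -> None:
        # Provenance is mandatory: no evidence, no signal.
        if not self.evidence:
            raise ValueError("a signal needs at least one piece of source evidence")
        # The accountable person must be able to see what they own.
        if self.owner not in self.authorized:
            raise ValueError("the owner must be authorized to see the signal")


def visible_to(person: str, signals: list[Signal]) -> list[Signal]:
    """Return only the signals this person is authorized to see."""
    return [s for s in signals if person in s.authorized]


# Made-up example: a customer promise surfaced from a call.
promise = Signal(
    what_changed="Customer was promised a delivery date on a call",
    evidence=(Evidence("sales call", "We can commit to that date."),),
    authorized=frozenset({"account_lead", "product_owner"}),
    owner="account_lead",
    disposition=Disposition.TASK,
)

print([s.what_changed for s in visible_to("account_lead", [promise])])
print(visible_to("intern", [promise]))
```

The design choice worth noticing is that the checks run at construction time: a signal without source evidence, or one whose owner cannot see it, never enters the system, so nothing downstream has to guess at provenance or ownership.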

People often miss this part of the Jevons paradox in AI. The rebound is not usage alone; it is usage, expectation, review, traceability, and responsibility arriving together. When intelligence gets cheaper, context becomes the constraint. When context gets cheaper, liability becomes the constraint.

The enterprise winners will not be the companies that automate the most decisions. They will be the companies that know which decisions can be delegated, which ones require human ownership, and what evidence each person is standing behind. That is why the company brain is more than a productivity layer. It is the memory infrastructure that lets an AI-mediated organization delegate work without losing accountability.

—

At Sentra, we are building what can only be described as a “company brain”: a shared intelligence and memory layer that sits on all communication channels, knowledge bases, and action and agent traces. It aims to understand how everyone in an organization actually works and how work actually gets done, constructing a living world model of the entire company in near real time.
